I am not sure why but sometimes I am glutting for punishment. Maybe its why I try every backup and restore solution I can get my hands on? While Microsoft has done an amazing job at building the best relational database engine Azure Backup for SQL Server Virtual Machines has some architecture problems. In this post, I will showcase things you need to focus on, problems, and workarounds for your initial run with an Azure Backup for SQL Server VMs.

What’s Azure Backup for SQL Server Virtual Machines (VMs)?

If you take a look at Azure Backup they added functionality for backing up SQL Server databases inside an Azure VM. This seems like a really cool feature. Let’s use the same technology we use to backup our VM’s to also backup our databases. You know the whole one-stop-shop for your disaster recovery needs. Comes with built-in monitoring and it also eliminates the struggle some people have with setting up certificates, encryptions, purging old backups in blob storage, backups and restores from blob storage. It is really nice to also have a similar experience as restoring Azure SQL Databases as well.

Unfortunately, the product doesn’t work as expected at this point in time. I would expect any database backup tool to be able and backup the system databases by default without any customization. Therefore, Last night I setup my first Azure Backup for SQL Server Virtual Machines in the Backup Vault and this morning you can see my results below.

Now we will dig into concerns and initial problems with Azure Backup for SQL Server Virtual Machines (VMs).

Automatically Backup New Databases

Having the ability to backup new databases automatically is taken for granted. So much, that I noticed that Azure Backup for SQL Server VM’s will not automatically backup new databases for you. That’s right. Make sure you remember to go in and detect and select your new database every time you add a database or you will not be able to recover.

Azure Backup for SQL Server VM’s has an interesting feature called Autoprotect. This should automatically backup all your databases for you. Unfortunately, this does not work. Yes, I double-checked by enabling autoprotect for a VM and I added a new database. The database didn’t get backed up so I had to manually add the database.

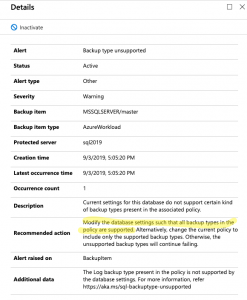

Simple Recovery Problems

Looking into the failures for my system database backups I noticed something interesting in the log for the master database. It looks like you will get errors with the only SQL Server backup policy created by default. The reason is the policy includes transactional log backups and as you know its impossible to take a transactional log backup if your database utilizes the simple recovery model. Now, most backup tools know how to roll with databases in simple and full recovery.

Looks like Azure Backup for SQL Server VM’s is not one of these tools that easily allow you to mix databases utilizing both simple and full recovery models.

So, how do we get around this? It is not too hard. Just create a new backup policy that does not include transactional log backups and assign it to your databases that utilize the simple recovery model.

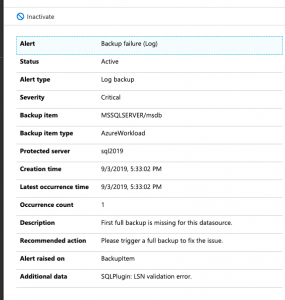

Transactional Log Backup Problems

So, what happens when you try to take a transactional log backup of a database that doesn’t have a full backup? It fails. This is by design. If you try to take a log backup in this scenario with T-SQL it will fail as well. That said, several 3rd Party open source backup solutions like my recommended one can gracefully handle this for you. It can take a full backup instead of the log backup. I have grown to expect this behavior.

Here is what you will see in the logs of Azure Backup for SQL Server VM’s.

So, the workaround here is simple. You can force a backup. This will start the process of allowing your schedule log backups to work as designed. You could also wait until the scheduled full backup runs but know this means you will not have point in time recovery until that full backup runs. There should be an option to perform a full backup instead of a transactional log backup if a full backup does not exist. This would prevent the transactional log scheduled backups from failing.

Things to Know!

Azure Backup for SQL Server VM’s pricing goes off of storage as well as instances of SQL. By default, compression is not used for the SQL Server Backups. You will most likely want to make sure you enable this to save some money.

Azure Backup for SQL Server VMs has a good amount of documentation for troubleshooting common problems.

There are many documented limitations that we didn’t cover in this blog post. Some shocking ones to me are SQL Server Failover Cluster Instances and don’t configure backup for more than 50 databases in one go